In daily operational weather forecasting, powerful supercomputers predict the weather by solving mathematical equations that model the atmosphere and oceans. In this process of numerical weather prediction (NWP), computers manipulate vast datasets collected from observations and perform extremely complex calculations to search for optimal solutions in spaces of dimension as high as 10^8. The idea of NWP was formulated as early as 1904, long before the invention of the modern computers needed to complete the vast number of calculations involved. In the 1920s, Lewis Fry Richardson used procedures originally developed by Vilhelm Bjerknes to produce by hand a six-hour forecast for the state of the atmosphere over two points in central Europe, taking at least six weeks to do so. In the late 1940s, a team of meteorologists and mathematicians led by John von Neumann and Jule Charney made significant progress toward more practical numerical weather forecasts. By the mid-1950s, numerical forecasts were being made on a regular basis (earthobservatory.nasa.gov and Wikipedia.org).

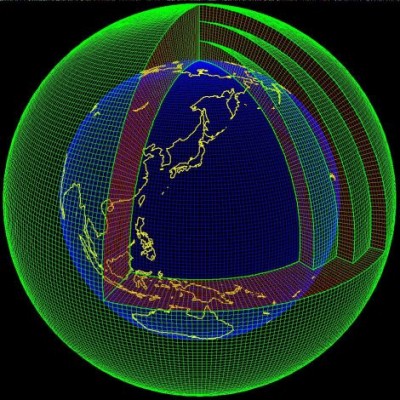

Several areas of mathematics play fundamental roles in NWP, including mathematical models and their associated numerical algorithms, computational nonlinear optimization in very high dimensions, manipulation of huge datasets, and parallel computation. Even after decades of active research and steady growth in the power of supercomputers, the forecast skill of numerical weather models extends to only about six days. Improving current models and developing new models for NWP have always been active areas of research. Operational weather and climate models are based on the Navier-Stokes equations coupled with various interacting earth components such as the ocean, land terrain, and water cycles. Many models use a latitude–longitude spherical grid. Its logically rectangular structure, orthogonality, and symmetry properties make it relatively straightforward to obtain various desirable accuracy-related properties. On the other hand, the rapid development of massively parallel computation platforms constantly renews the impetus to investigate better mathematical models using traditional or alternative spherical grids. Interested readers are referred to a recent survey paper in the Quarterly Journal of the Royal Meteorological Society (Vol. 138: 1-26).

Initial conditions must be generated before one can compute a solution for weather prediction. The process of entering observation data into the model to generate initial conditions is called data assimilation. Its goal is to find an estimate of the true state of the weather based on observations (e.g. sensor data) and prior knowledge (e.g. mathematical models, system uncertainties, and sensor noise). A family of variational methods called 4D-Var is widely used in NWP for data assimilation. In this approach, a cost function based on the initial-state and sensor error covariances is minimized to find the solution of a numerical forecast model that best fits a series of observational datasets distributed in space over a finite time interval. Another family of data assimilation methods is ensemble Kalman filters. They are reduced-rank Kalman filters based on sample error covariance matrices, an approach that avoids the integration of a full-size covariance matrix, which is impossible even for today's most powerful supercomputers. In contrast to the interpolation methods used in the early days, 4D-Var and ensemble Kalman filters are iterative methods that can be applied to much larger problems. Yet the effort to solve problems of even larger size is far from over. Current day-to-day forecasting uses global models with grid resolutions between 16 and 50 km, and resolutions of about 2 to 20 km for short-period local forecasting. Developing efficient and accurate data assimilation algorithms for higher-resolution models is a long-term challenge that will face mathematicians and meteorologists for many years to come.
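
To make the ensemble idea a little more concrete, here is a minimal Python sketch of a single analysis step of a perturbed-observation ensemble Kalman filter. It is a toy under strong assumptions, not an operational scheme: the state is a single scalar (say, a temperature), it is observed directly, and the function name `enkf_analysis`, the ensemble size, and all numerical values are hypothetical choices made for illustration. The point it shows is that the error variance is estimated from the ensemble sample rather than stored and propagated explicitly.

```python
import random
import statistics


def enkf_analysis(ensemble, observation, obs_variance, rng):
    """One perturbed-observation EnKF analysis step for a scalar state.

    Assumes the state is observed directly (observation operator H = 1).
    """
    # Forecast error variance estimated from the ensemble sample.
    forecast_variance = statistics.variance(ensemble)
    # Scalar Kalman gain: weight given to the observation.
    gain = forecast_variance / (forecast_variance + obs_variance)
    obs_std = obs_variance ** 0.5
    # Each member assimilates its own randomly perturbed copy of the observation.
    return [
        member + gain * (observation + rng.gauss(0.0, obs_std) - member)
        for member in ensemble
    ]


rng = random.Random(0)
# Hypothetical forecast ensemble: 200 members scattered around 15.0.
prior = [rng.gauss(15.0, 2.0) for _ in range(200)]
# Hypothetical observation of 18.0 with error variance 1.0.
posterior = enkf_analysis(prior, observation=18.0, obs_variance=1.0, rng=rng)

print("prior mean:      ", statistics.mean(prior))
print("posterior mean:  ", statistics.mean(posterior))
print("prior spread:    ", statistics.stdev(prior))
print("posterior spread:", statistics.stdev(posterior))
```

In this toy setting the analysis pulls the ensemble mean toward the observation and shrinks the ensemble spread. In a realistic model the scalar variance becomes a sample covariance matrix built from a comparatively small number of ensemble members, which is what makes the approach reduced-rank.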

Wei Kang

Naval Postgraduate School

Monterey, California